Cameras inspired by bug eyes are used in real-time robotic sensing applications, helping robots accurately and carefully grasp objects in a cluttered scene.

For a working robot, the end-of-arm tooling, also known as the robot gripper, is one of the most important parts of the system. It is the physical interface between the robot arm and its job, be that garbage sorting, construction, or human interaction.

Grasping is a difficult task for a robot. Humans have two eyes to see with, as well as an innate system that lets us perceive the distance to objects, estimate their size and expected weight, and pick them up with the correct pressure and strength. All this means we can grasp objects without much thought. For robots, this requires an array of algorithms, high-quality cameras, and a robotic gripper sensitive enough to adapt to different requirements. And that’s hard enough when there’s just a single item on a table. Clutter the scene so that the robot must detect one specific item, and things get complicated.

A team of researchers from Khalifa University, in collaboration with Dubai Future Labs, Strata Manufacturing and Purdue University, has developed a new approach using neuromorphic cameras and novel frameworks for robots to grasp objects in cluttered scenes. Their approach can boost production speed in automated manufacturing, offering a robust system that operates in low-light conditions too.

This is the first proposed framework for event-based robotic grasping for multiple known and unknown objects in a cluttered scene.

The KU team comprised Xiaoqian Huang, PhD candidate; Mohamad Halwani, graduate student; Abdulla Ayyad, Research Associate; Prof. Lakmal Seneviratne, Director of the Center for Autonomous Robotic Systems (KUCARS); and Dr. Yahya Zweiri, Associate Professor and Acting Director of the KU Advanced Research and Innovation Center (ARIC). They published their results in the Journal of Intelligent Manufacturing.

“Robots equipped with grippers have become increasingly popular and important for grasping tasks in the industrial field, because they provide the industry with the benefit of cutting manufacturing time while improving throughput,” Dr. Zweiri said. “Robotic vision plays a key role for perceiving the environment in grasping applications but conventional frameworks for vision suffer from motion blur and low sampling rate. In an evolving industrial landscape, this type of vision may not keep up.”

Assisted by vision, robots can perceive the surrounding environment, including the attributes and locations of their target objects. There’s more than one type of vision and systems can be categorized by the analytic and data-driven methods used to assess the geometric properties of objects. Some types of vision are known as model-based approaches, where objects are known to the robot already based on prior knowledge. Model-free methods are more flexible for both known and unknown objects as the robot learns the geometric parameters of the objects in front of it.

Traditional vision frameworks suffer many drawbacks. Standard vision sensors continue to sense and save picture data as long as the power is on, resulting in significant power consumption and the need to store large volumes of data. Motion blur and poor observation in low-light conditions are also concerns: The quality of a picture taken by a standard camera will be affected by the moving speed of a conveyor belt in a production line, for example.

“Standard cameras also commonly have a frame rate of less than 100 frames per second,” Dr. Zweiri said. “Even for high-speed frame-based cameras, the frequency is generally less than 200 frames per second. Computing the complex algorithm for vision processing will take additional time. Accelerating vision acquirement and the processing will help improve grasping efficiency.”
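To put those frame rates in perspective, the short Python sketch below works out how far an object travels between two consecutive frames. The conveyor speed of 0.5 metres per second and the function name are illustrative assumptions, not figures from the research.

```python
def travel_between_frames_mm(speed_m_per_s, frames_per_second):
    """Distance in millimetres an object moves between two consecutive frames."""
    frame_interval_s = 1.0 / frames_per_second  # time between frames
    return speed_m_per_s * frame_interval_s * 1000.0


# Assumed conveyor speed of 0.5 m/s (hypothetical, for illustration only).
for fps in (100, 200):
    distance = travel_between_frames_mm(0.5, fps)
    print(f"{fps} fps: about {distance:.1f} mm between frames")
```

Under that assumed speed, the object shifts by several millimetres between frames, during which a frame-based camera records nothing new about it.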

An event camera does not capture images using a shutter like conventional frame cameras. Instead, it is an imaging sensor that responds to local changes in brightness, with each pixel operating independently and asynchronously, reporting changes in brightness as they occur, and staying silent otherwise. Also known as neuromorphic vision sensors, they are inspired by biological systems such as fly eyes, which can sense data in parallel and asynchronously in real time.
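The per-pixel behavior described above can be approximated in a few lines of Python. This is a simplified sketch, not the team's method: it compares two brightness snapshots and emits an event wherever the change in log brightness crosses a threshold, whereas a real sensor reports each change asynchronously. The function name, the threshold of 0.2, and the tiny 4x4 array are all assumptions made for illustration.

```python
import numpy as np


def events_from_frames(prev, curr, t, threshold=0.2):
    """Return (x, y, t, polarity) for each pixel whose log brightness
    changed by at least `threshold`; unchanged pixels produce nothing."""
    eps = 1e-6  # avoids log(0) for fully dark pixels
    diff = np.log(curr + eps) - np.log(prev + eps)
    ys, xs = np.nonzero(np.abs(diff) >= threshold)
    # Polarity is +1 for brightening and -1 for darkening.
    return [(int(x), int(y), t, 1 if diff[y, x] > 0 else -1)
            for x, y in zip(xs, ys)]


prev = np.full((4, 4), 0.5)  # uniform scene
curr = prev.copy()
curr[1, 2] = 1.0             # one pixel brightens
curr[3, 0] = 0.1             # one pixel darkens

events = events_from_frames(prev, curr, t=0.001)
print(events)
```

Only the two pixels that changed generate events, while the other fourteen stay silent, which is why an event stream can be far sparser than a sequence of full frames.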

“In comparison to traditional frame-based vision sensors, event-driven neuromorphic sensors have low latency, a high dynamic range and high temporal resolution,” Dr. Zweiri said. “Using these results in a stream of events with a microsecond-level time stamp, no motion blur, low-light operation and a faster response and higher sampling rate. However, there are few works using event cameras to address gripping challenges, such as dynamic force estimation.”

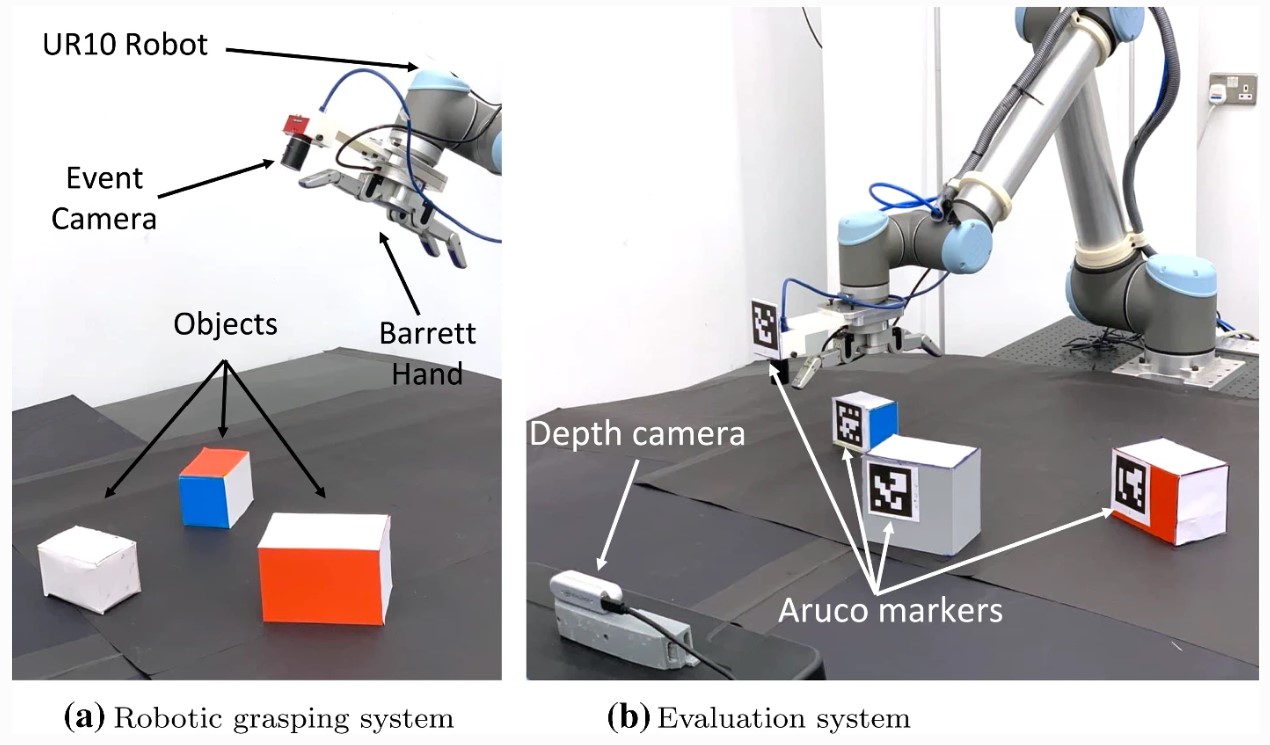

In testing their framework using the neuromorphic camera in the robotic gripper, the team ensured there were no obstacles between the objects and the gripper. The framework obtains the target’s position, classifies it, and estimates the necessary grasping pose for the gripper. They developed two approaches: one model-based and one model-free.

“The model-based approach provides a solution for grasping known objects in the environment, with prior knowledge of the object shape to be grasped,” Dr. Zweiri said. “The model-free approach can be applied to unknown objects in real time.”

Both approaches can complete the grasping tasks successfully. The team found the model-based approach is slightly more accurate, but it is constrained to known objects with prior knowledge of their models. Both are applicable and effective for neuromorphic vision-based grasping, and the choice between them depends on the specific scenario. Future work will focus on more complex situations, such as occluded objects.

Jade Sterling

Science Writer

26 January 2023