To support people suffering from paralysis, undergraduate students at Khalifa University have explored how to translate brain waves into speech by using AI, specifically deep learning techniques.

Paralysis is a serious medical condition threatening the livelihoods of approximately 5.4 million people around the world. For those with full-body paralysis, even speaking is impossible, which severely limits their independence.

One way to overcome this inability to communicate is by tapping into a person’s brain wave signals and translating those signals into speech.

As their Senior Design Project, students in the Khalifa University Department of Electrical Engineering and Computer Science developed an electroencephalogram (EEG) control system that helps disabled people communicate with others. The students, Ahmed Alkhateri, Mohamed Alnuaimi, Saif Alshehhi and Ahmed Alzaabi, are supervised by Dr. Kin Poon, Chief Researcher at Emirates ICT Innovation Center (EBTIC), and Dr. Leontios Hadjileontiadis, Professor of Electrical and Computer Engineering and Acting Chair of the Department of Biomedical Engineering.

“Every day is a challenge for patients that suffer from full-body paralysis and we hope this project will ease their lives,” explained Alzaabi. “Our project uses EEG signals to implement a brain-computer interface (BCI) to help paralyzed patients communicate with other people freely.”

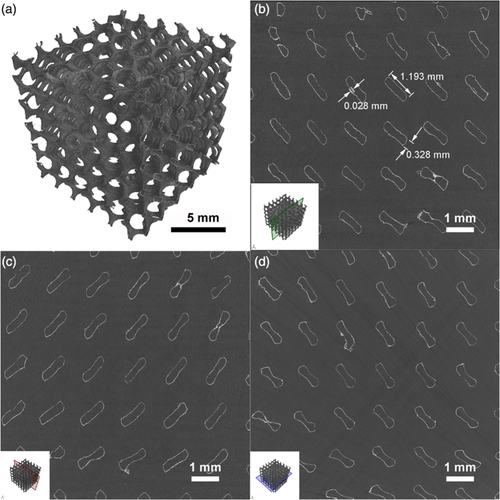

EEG records the electrical activity of the brain. The signals are captured while an individual thinks and wears an EEG headset, which comprises multiple dry electrodes. The captured signals are then analyzed using deep learning algorithms called multi-layered Artificial Neural Networks (ANNs). In this project, the ANNs used are Convolutional Neural Networks (CNNs), a class of neural networks often used in image recognition because they are well suited to processing image pixel data.
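To give a sense of why CNNs suit image data, here is a minimal sketch of the sliding-filter operation a convolutional layer applies to a grid of pixel values. It is an illustration only, not the students' network: the function name `conv2d` and the example filter are assumptions made for this sketch.

```python
def conv2d(image, kernel):
    """Slide a small filter over a 2D image (no padding, stride 1).

    This is the cross-correlation that CNN layers compute; a real
    network learns many such filters and stacks several layers.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(
                image[i + a][j + b] * kernel[a][b]
                for a in range(kh)
                for b in range(kw)
            )
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]


# Hypothetical 3x3 "image" and a 2x2 filter that responds to diagonal change.
pixels = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
diagonal_filter = [[1, 0], [0, -1]]
feature_map = conv2d(pixels, diagonal_filter)
```

Each output value summarizes one small patch of the image, which is what lets the network pick up local patterns wherever they occur.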

For the CNN to process the data, the information extracted using the headset (Fig. 1) is first converted into an image. EEG signals present particular wave forms (Fig. 2) when recorded, which can be converted into a spectrogram. In this project, the person wearing the headset is presented with a grid of letters (Fig. 3).

The grid flashes at random and a specific wave form called the P300 is recorded by the headset when the letter desired by the wearer is illuminated. A P300 appears as a peak relative to baseline EEG signals and occurs approximately 300 milliseconds after the presentation of an infrequent stimulus, in this case the flashing grid.
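One common way to look for such a peak is to average several recordings time-locked to the flash and compare the amplitude around 300 milliseconds with an earlier baseline. The sketch below illustrates that idea under stated assumptions: the 250 Hz sampling rate, the 250 to 450 millisecond window and the function names are hypothetical, and the article does not say whether the students use this approach.

```python
from statistics import mean

FS = 250  # assumed sampling rate in Hz (not stated in the article)


def average_epochs(epochs):
    """Average equal-length epochs sample by sample to suppress noise."""
    return [mean(samples) for samples in zip(*epochs)]


def p300_score(epoch, fs=FS, window=(0.25, 0.45)):
    """Mean amplitude inside the window minus the mean before it.

    Each epoch is assumed to start at the moment of the flash, so the
    samples before the window serve as a simplified baseline.
    """
    start, stop = int(window[0] * fs), int(window[1] * fs)
    baseline = mean(epoch[:start])
    return mean(epoch[start:stop]) - baseline


# Synthetic one-second epochs: a bump near 300 ms versus a flat trace.
with_peak = [5.0 if 70 <= n < 100 else 0.0 for n in range(FS)]
flat = [0.0] * FS
target_score = p300_score(average_epochs([with_peak, with_peak]))
other_score = p300_score(average_epochs([flat, flat]))
```

A flash that lit the desired letter should give a clearly higher score than one that did not.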

“Through our research, we quickly found that this idea has already been implemented, so we questioned how we could improve on it,” explained Alnuaimi. “We plan to improve by implementing a unique interface that will be more user-friendly when using the Arabic alphabet rather than English and also provide a predicted words functionality.”

The students designed a grid in which, every second, one of the rows or columns flashes at random. When the row or column containing the desired letter is illuminated, a P300 signal is generated. The rows and columns continue to flash until the second desired letter produces a second P300 signal. In this way, the algorithm can begin predicting the desired word.
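The row-and-column scheme means a letter can be identified from the intersection of the row and the column that produced the strongest response. Here is a minimal sketch of that lookup, assuming each row and column already has a numeric P300 score. The 6x6 Latin grid, the scores and the name `decode_letter` are hypothetical; the students' interface targets the Arabic alphabet and its layout is not given in the article.

```python
# Hypothetical 6x6 layout, used only for illustration.
GRID = [
    "ABCDEF",
    "GHIJKL",
    "MNOPQR",
    "STUVWX",
    "YZ1234",
    "56789_",
]


def decode_letter(row_scores, col_scores, grid=GRID):
    """Return the symbol where the best-scoring row and column cross."""
    row = max(range(len(row_scores)), key=row_scores.__getitem__)
    col = max(range(len(col_scores)), key=col_scores.__getitem__)
    return grid[row][col]


# Made-up scores: the third row and fourth column responded most strongly.
letter = decode_letter(
    row_scores=[0.1, 0.2, 0.9, 0.1, 0.0, 0.0],
    col_scores=[0.0, 0.1, 0.1, 0.8, 0.0, 0.0],
)
```

With these made-up scores the lookup lands on row 3, column 4 of the grid, the letter "P".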

The signals are converted into a spectrogram, with one spectrogram formed every second, and these images are then fed into the CNN, which can process the data it receives and determine the letter selected by the user.
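To illustrate how a one-second signal becomes an image, the sketch below cuts the signal into short overlapping frames and computes the frequency content of each one, giving a grid of time by frequency. It uses only the Python standard library and a plain discrete Fourier transform for clarity. The 256 Hz sampling rate, the frame sizes and the function name `spectrogram` are assumptions, not details from the project.

```python
import cmath
import math


def spectrogram(signal, win=64, hop=32):
    """Return one magnitude spectrum per Hann-windowed, overlapping frame."""
    hann = [0.5 - 0.5 * math.cos(2 * math.pi * n / (win - 1)) for n in range(win)]
    frames = []
    for start in range(0, len(signal) - win + 1, hop):
        frame = [signal[start + n] * hann[n] for n in range(win)]
        # Naive DFT, keeping only the non-negative frequency bins.
        spectrum = [
            abs(sum(x * cmath.exp(-2j * math.pi * k * n / win)
                    for n, x in enumerate(frame)))
            for k in range(win // 2 + 1)
        ]
        frames.append(spectrum)
    return frames


# One second of a synthetic 12 Hz sine wave at an assumed 256 Hz rate.
fs = 256
signal = [math.sin(2 * math.pi * 12 * t / fs) for t in range(fs)]
image = spectrogram(signal)  # rows are time frames, columns are frequency bins
```

For this synthetic input the result has 7 time frames of 33 frequency bins each, and every frame is brightest in the bin that corresponds to 12 Hz. A production system would more likely use an optimized FFT routine than this direct transform.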

“One of the main requirements of this project is to have the system work in real-time,” explained Alshehhi. “Once a letter is chosen, the program suggests some words that fit the chosen letters to ease the completion of the desired word.”
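The word suggestion step can be pictured as a prefix lookup: once a few letters are chosen, the program offers words that begin with them. The sketch below is one simple way to do this, not the students' implementation. The vocabulary, its ordering by assumed frequency and the name `suggest_words` are hypothetical.

```python
def suggest_words(prefix, vocabulary, limit=3):
    """Return up to `limit` words that start with the letters chosen so far.

    The vocabulary is assumed to be ordered from most to least frequent,
    so the earliest matches are offered first.
    """
    prefix = prefix.lower()
    matches = [word for word in vocabulary if word.lower().startswith(prefix)]
    return matches[:limit]


# Hypothetical vocabulary for illustration only.
vocabulary = ["water", "want", "help", "hello", "warm"]
suggestions = suggest_words("wa", vocabulary)
```

Because the match works on Unicode strings, the same lookup applies to an Arabic vocabulary as well as an English one.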

Deep learning is one of the hot topics in artificial intelligence research because it has many successful applications. Its application in recognizing brain wave signals is an up-and-coming area of research, but not without its difficulties.

“The main limitation we faced is the data size. In order to get more accurate results, we need to train the algorithm with more data,” explained Alkhateri. “For a CNN to work at an acceptable accuracy, a lot of data is required to train the system, which is time consuming. Furthermore, the device we are using has an accuracy rate of 65 percent, which, while good for its price, is still a limiting factor. Other minor limitations include the sample size we used and the processing power we have access to.”

“There are things we can do to further enhance this project,” added Alkhateri, who hopes to pursue his MSc with KU to further develop the project. “We could use a cloud service with better computational power to reduce processing time and improve performance. Users can create their own profiles online, find all their previous data and add predicted words to suit their use cases. We could also improve the interface by allowing users to customize the interface to their needs.”

Jade Sterling

Science Writer

22 July 2020